Create With Intention

Agentic engineering in practice

Jonas Juhl Nielsen · 2026

A full-day workshop on building real things with AI agents.

From sketch to ship.

Born

Passion

As a kid, I couldn't stop making

my own video games

(including playing them)

Career

Computer Graphic Artist

& Software Engineering

Sun Creature — Early

Pipeline Manager &

Technical Director

(Oscar-nominated Flee)

Sun Creature — Mid

Technical Producer &

Project Manager

Sun Creature — Late

Head of Production

& Technology

Crossroad

Founder, Animation Studio.

Fully freelance & remote-based

Now

- Crossroad rebranding into Web

- Building Creativeshire (Webbuilder for freelancers)

- Freelance Full Stack Creative Developer

- Teaching

What To Expect

- Vibe Coding — just build. Describe what you want and iterate

- Prompt Engineering — encode your intention with structure, constraints, and precision

- Context Engineering — shape what the AI knows with persistent context and tools

- Agentic Engineering — orchestrate multiple agents into a coordinated system

With one throughline: intention.

Table of Contents

Your Journey Today

Ch 1 — Vibe Coding

Getting Started · 09:15–10:30

- CONNECT: your experience with AI

- THEORY: what is vibe coding, why intention matters

- WATCH: Figma Make x Claude Code

- BUILD: meet your tool (Claude Code), then vibe-code anything from scratch

Break — 15 min

Ch 2 — Prompt Engineering

Taking Control · 10:45–12:00

- CONNECT: vibe coding — how did it go?

- THEORY: structured prompting, specificity, constraints, output formatting

- WATCH: Getting Started with Claude.ai

- BUILD: rebuild your Ch 1 project with structured prompts and proper tooling

Lunch — 1 hour

Ch 3 — Context Engineering

Building the Brain · 13:00–14:15

- CONNECT: prompt engineering — how did it go?

- THEORY: the agent loop, CLAUDE.md, SPEC files, skills, tool use, CLI & MCP

- WATCH: MCP & skills in action

- BUILD: architect your project (CLAUDE.md + SPEC files + skill.md), then one-shot rebuild

Break — 15 min

Ch 4 — Agentic Engineering

Building the System · 14:30–15:45

- CONNECT: context engineering — how did it go?

- THEORY: from one to many, subagents, multi-agent patterns, the human in the loop

- WATCH: multi-agent coordination

- BUILD: use subagents, coordinate a workflow, ship a feature

Break — 15 min

Chapter 1 — Vibe Coding

Getting Started

Your Experience With AI

Open discussion — share with the room

What Is Vibe Coding?

There's a new kind of coding I call "vibe coding", where you fully give in to the vibes, embrace exponentials, and forget that the code even exists.

— Andrej Karpathy, Feb 2025

Programming by conversation — describe what you want in plain language, and the AI writes the code.

— General definition

The fastest path from idea to working software — no syntax required, just intention.

— Our definition

Why Vibe Coding Works

Speed over perfection

- Zero setup, zero planning — just start

- Great for exploring ideas fast

- But — you trade control for speed

Rapid prototyping

- You don't always know what you want — until you see it

- Generate, react, discover — each iteration strengthens your intention

Why Intention Matters

The intention is what makes what we build human.

Without intention

"Build me a house"

→ 1,000,000 possible outcomes

"Build me a lamp"

→ 1,000,000 possible lamps

You iterate on option #4,030,252

Low probability it matches what you actually wanted

The human is out of the loop — the result becomes generic

With intention

"Build me a brutalist concrete house with an inner courtyard"

→ 10 possible outcomes

"Build me a minimal Japanese paper floor lamp"

→ 10 possible lamps

You iterate on option #3, then option #2

Each step closer to your initial intention

The human stays in the loop — the result becomes yours

More Than Text

Each extra sense gets it closer to understanding what you want.

Human — 5 senses

- Sight

- Hearing

- Smell

- Touch

- Taste

AI — multi-modal

- Text (multi-language understanding)

- Images

- Video

- Audio

... growing

Watch

Figma Make x Claude Code

What Is Claude Code?

An AI agent that lives in your terminal. You instruct — it executes.

- Opens in your terminal — no IDE required

- You describe what you want in plain English

- It reads files, writes code, runs commands, fixes errors

- It does what you tell it to — coding is just one of the things it can do

It should have been called Claude Instruct.

Go.

25 minutes — vibe code anything from scratch

We regroup at 10:25

Assignment

- Build anything with your intention

- Describe what you want in plain language

- Don't plan — just talk

- Let the AI decide everything

- React, redirect, refine, or start over

Setup

- Install Node.js (v18+)

- Open a terminal

- Get an Anthropic API key

npm i -g @anthropic-ai/claude-code

mkdir my-project && cd my-project

claude

Using a different tool? That's fine — the theory is the same.

Need help? Ask your agent.

Break — 15 min

What's Next

You just proved you can build. But can you control it?

Next up — Prompt Engineering: Taking Control

Chapter 2 — Prompt Engineering

Taking Control

How Did It Go?

Open discussion — share with the room

What Is Prompt Engineering?

The process of writing effective instructions for a model, such that it consistently generates content that meets your requirements.

— OpenAI

A relatively new discipline for developing and optimizing prompts to efficiently build with large language models.

— DAIR.AI Prompt Engineering Guide

Prompt engineering is the art of encoding your intention so precisely that the AI has no room to guess.

— Our definition

Anatomy of a Good Prompt

- Specificity & Constraints — reduce the solution space

- Structured Prompting — clear sections & hierarchy

- Output Formatting — define the shape of the response

- Few-shot Examples — show, don't just tell

- Chain-of-thought — force step-by-step reasoning

- Role Prompting — assign expertise & perspective
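The six techniques above can be treated as optional building blocks that are stacked onto a core request. The following is a minimal sketch of that idea, using a coffee-shop landing page as the sample task. `PromptSpec` and `build_prompt` are hypothetical names invented for this illustration, not part of any tool.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt ingredients listed above."""
    task: str
    role: str = ""
    constraints: list[str] = field(default_factory=list)
    output_format: str = ""
    examples: list[str] = field(default_factory=list)
    think_first: bool = False


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the parts into one prompt, skipping any that are empty."""
    parts: list[str] = []
    if spec.role:
        parts.append(spec.role)                       # role prompting
    parts.append(spec.task)                           # the core request
    if spec.constraints:
        bullets = "\n".join(f"- {c}" for c in spec.constraints)
        parts.append("Constraints:\n" + bullets)      # specificity & constraints
    if spec.output_format:
        parts.append(spec.output_format)              # output formatting
    if spec.examples:
        parts.append("\n".join(spec.examples))        # few-shot examples / references
    if spec.think_first:
        # chain-of-thought: ask for reasoning before code
        parts.append("Think through the layout before you start writing code.")
    return "\n\n".join(parts)


prompt = build_prompt(PromptSpec(
    task="Make me a landing page for a coffee shop called Brew.",
    role="You're a frontend developer who cares about clean, readable code.",
    constraints=["Dark colors", "Works on phones", "Single page"],
    output_format="Put everything in one HTML file. Keep it simple, no frameworks.",
    think_first=True,
))
print(prompt)
```

Leaving a field empty simply drops that section, so the same function covers everything from a bare one-line request to a fully structured prompt.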

> Make me a landing page

> You're a frontend developer who cares about clean, readable code.

Make me a landing page for a coffee shop called Brew. Dark colors, works on phones, single page.

I need a hero section, a menu, and a contact form.

Put everything in one HTML file. Keep it simple, no frameworks.

Here's a screenshot of the style I'm going for: [image attached]

Think through the layout before you start writing code.

Comparing Prompts

| Vague | Intentional |

|---|---|

| "Fix this bug" | "This function returns undefined when the input is empty. Find the cause, fix it, and write a test." |

| "Review this code" | "Review for SQL injection and missing input validation." |

| "Build a form" | "Build a registration form with email validation and inline error messages." |

When The Output Is Wrong

Map the symptom to the technique.

| Symptom | Fix |

|---|---|

| Too generic or vague | Add specificity & constraints |

| Messy or hard to use | Define output format |

| Missing nuance or edge cases | Add examples (few-shot) |

| Wrong approach or logic errors | Add chain-of-thought |

| Wrong tone or perspective | Assign a role |

Planning Before Execution

You know what to say. But before you say it — think about how to work.

Plan Mode

- AI explores the codebase

- Reads files, searches, asks questions

- Designs an approach

- Does not write code

Think of it as the architect phase.

Execute Mode

- AI writes code, runs commands

- Creates files, edits, tests

- Pushes to Git, creates PRs

- Acts on the plan

Think of it as the builder phase.

Plan → iterate → execute → review → fresh agent

Don't steer a sinking ship. Start a new one with a better plan.

Tooling

Tools are external capabilities the AI can call — file system, terminal, browser, APIs.

Built-in (CLI)

- bash: run any command in your terminal

- read/edit/write: file operations

- grep/glob: search the codebase

- gh: push code, create PRs, manage issues

External (MCP)

- Figma — read designs, generate code

- Playwright — open a browser, test UIs

- Databases — query, migrate, seed

- Any API you can wrap as a server

Tools are what turn a chatbot into an agent. We'll go deep on MCP in Ch 3 & 4.

Watch

Getting Started with Claude.ai

Go.

25 minutes — rebuild your Ch 1 project with structured prompts

We regroup at 11:40

Assignment

- Same project as Ch 1, but more polished

- Start in plan mode — approve the plan — then execute

- When the output is wrong — diagnose and iterate

- Set up your repo with GitHub CLI

Prompt checklist

- Specificity & constraints

- Structure & hierarchy

- Output format

- Examples or references

- Chain-of-thought

- Role prompting

Need help? Ask your agent.

Lunch — 1 Hour

What's Next

Next up — Context Engineering: Building the Brain

Chapter 3 — Context Engineering

Building the Brain

How Did It Go?

Open discussion — share with the room

What Is Context Engineering?

The art of providing all the context for the task to be plausibly solvable by the LLM.

— Tobi Lutke, CEO of Shopify

Context engineering is the discipline of shaping what the AI sees — writing, selecting, compressing, and isolating context for each task.

— Sequoia Capital

A perfect prompt with zero context is still a guess. Context engineering is how you give AI the knowledge to get it right.

— Our definition

The Context Stack

Context is not one thing — it's layers. Some are always present, others load on demand.

Context Stack
│
├─ System Prompt ← built into the tool
│
├─ CLAUDE.md ← project root (you write this)
│  ├─ src/CLAUDE.md ← component rules
│  ├─ tests/CLAUDE.md ← test conventions
│  └─ docs/CLAUDE.md ← writing style
│
├─ User Prompt ← your message
│
├─ Tool Results ← lazy-loaded
│  ├─ file reads
│  ├─ search results
│  └─ web fetches
│
└─ Conversation History ← prior messages

How it stacks

- System Prompt — built into Claude Code by Anthropic. You don't write this.

- CLAUDE.md — the highest layer you control. Persistent project context, loaded at start

- Subfolder CLAUDE.md — scoped rules, lazy-loaded when the AI enters that directory

- User Prompt — your per-message instruction

- Tool Results — context the AI gathers on demand

- History — everything said so far

Not everything is loaded at once — context is lazy-loaded as needed.
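To make the layering concrete, here is a simplified sketch of how the stack could be assembled for one message. It is an assumption-laden model, not how Claude Code is actually implemented: `collect_claude_md` and `build_context` are hypothetical helpers, and the rule "load a scoped CLAUDE.md only for the folder the agent is working in" is a simplification of lazy loading.

```python
import tempfile
from pathlib import Path


def collect_claude_md(project_root: Path, working_dir: Path) -> list[Path]:
    """Return CLAUDE.md files from the project root down to working_dir.

    The root file comes first; scoped (subfolder) files follow, and only
    folders on the path to working_dir are considered.
    """
    root = project_root.resolve()
    rel = working_dir.resolve().relative_to(root)  # ValueError if outside root
    folders = [root]
    for part in rel.parts:
        folders.append(folders[-1] / part)
    return [f / "CLAUDE.md" for f in folders if (f / "CLAUDE.md").is_file()]


def build_context(system_prompt: str, project_root: Path,
                  working_dir: Path, user_prompt: str) -> list[tuple[str, str]]:
    """Stack the layers in order: system prompt, CLAUDE.md files, user prompt."""
    root = project_root.resolve()
    layers = [("system", system_prompt)]
    for path in collect_claude_md(root, working_dir):
        layers.append((path.relative_to(root).as_posix(), path.read_text()))
    layers.append(("user", user_prompt))
    return layers


# Demo with a throwaway project: tests/CLAUDE.md exists but is never loaded,
# because the agent is working in src/.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "src").mkdir()
    (root / "tests").mkdir()
    (root / "CLAUDE.md").write_text("Project: demo site")
    (root / "src" / "CLAUDE.md").write_text("Components use PascalCase")
    (root / "tests" / "CLAUDE.md").write_text("Tests live next to fixtures")
    layers = build_context("(built-in system prompt)", root, root / "src", "Add a footer")

for name, text in layers:
    print(f"{name}: {text}")
```

Tool results and conversation history are left out to keep the sketch short; they would be appended as further layers as the session progresses.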

Your Codebase Is the Context

Your codebase, way more than the prompt, is the biggest influence on AI's output.

- Files & folder structure are the map

- Tests, types & linting are the feedback loop

- Design patterns & domain modeling guide AI decisions

Software quality matters more than ever.

The Architect vs The Agent

You see architecture. The AI sees tokens.

You — The Architect

You know how the pieces connect.

AI — Fresh Agent

No memory. No map. Every session starts from zero.

Inside a Session

What happens when an agent starts a session?

The Bottleneck

Most agent failures aren't model failures — they're context failures.

Prompt engineering → one message

Context engineering → a system

The Agent Loop

Every agent follows the same cycle.

1. Read — observe the current state (files, errors, output)

2. Plan — decide what to do next

3. Act — call a tool (write code, run a command, fetch data)

4. Observe — check the result — did it work?

Then repeat. The loop is what makes an agent an agent.

This is why agents can self-correct — they see their own mistakes and try again.
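The four steps above can be sketched as a small loop. This is a toy model under stated assumptions: the "planner" is a hand-written function standing in for the model, and `run_agent_loop`, `world`, and the action names are all invented for illustration.

```python
from typing import Callable, Optional


def run_agent_loop(
    read_state: Callable[[], str],
    plan: Callable[[str], Optional[str]],
    act: Callable[[str], str],
    max_turns: int = 10,
) -> list[tuple[str, str]]:
    """Run read -> plan -> act -> observe until plan() returns None."""
    transcript: list[tuple[str, str]] = []
    for _ in range(max_turns):
        state = read_state()                      # 1. Read
        action = plan(state)                      # 2. Plan
        if action is None:                        # nothing left to do
            break
        observation = act(action)                 # 3. Act
        transcript.append((action, observation))  # 4. Observe
    return transcript


# Toy world: a buggy add() that the "agent" notices and fixes.
world = {"add": lambda a, b: a - b, "last_result": "not run"}


def read_state() -> str:
    return world["last_result"]


def plan(state: str) -> Optional[str]:
    if state in ("not run", "code fixed"):
        return "run_tests"
    if state == "tests failed":
        return "fix_code"
    return None  # tests passed, so the loop can stop


def act(action: str) -> str:
    if action == "fix_code":
        world["add"] = lambda a, b: a + b
        world["last_result"] = "code fixed"
    else:  # "run_tests"
        passed = world["add"](2, 3) == 5
        world["last_result"] = "tests passed" if passed else "tests failed"
    return world["last_result"]


transcript = run_agent_loop(read_state, plan, act)
for action, observation in transcript:
    print(f"{action} -> {observation}")
```

The self-correction comes from the loop itself: the failed test run becomes the state that the next planning step reads.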

Spec Files

Define what each piece does — and how they connect.

The Context File Hierarchy

your-project/
├─ CLAUDE.md
├─ src/
│  └─ CLAUDE.md ← scoped rules
├─ tests/
│  └─ CLAUDE.md ← test conventions
└─ .claude/
   ├─ specs/
   │  ├─ feature-a.spec.md
   │  └─ feature-b.spec.md
   ├─ skills/
   │  ├─ deploy.md
   │  └─ test.md
   └─ settings.json ← tool permissions

~/.claude/
└─ CLAUDE.md ← personal, per employee

How it stacks

- CLAUDE.md — project identity. Always loaded.

- SPEC files — feature blueprints. One per module.

- skill.md — reusable procedures. Your plugin library.

- Scoped CLAUDE.md — subfolder rules. Lazy-loaded.

- Settings — tool permissions, allowed commands, env variables.

CLAUDE.md in Practice

From the Claude Code team themselves.

Our team shares a single CLAUDE.md for the entire repo. We check it into Git, and the whole team contributes multiple times a week. Anytime Claude does something incorrectly we add it to the CLAUDE.md, so Claude knows not to do it next time.

— Boris Cherny, Claude Code team @ Anthropic

- Checked into Git — shared, versioned, reviewed like code

- Updated constantly — every mistake becomes a rule

- Compound engineering — every session gets smarter

Skills: Your Plugin Library

A skill is a prompt injection — a reusable procedure, loaded on demand when you invoke it.

# Simple skill: single file

.claude/skills/
└─ deploy.md ← one markdown file

You type: /deploy

Claude sees: the full contents of deploy.md, injected into the conversation

# Full skill: folder with resources

.claude/skills/
└─ review-pr/
   ├─ skill.md ← the prompt (entry point)
   ├─ checklist.md ← loaded on demand
   └─ examples/
      ├─ good-pr.md ← reference material
      └─ bad-pr.md ← anti-patterns

# Inside skill.md

---
description: Review a pull request
tools: [Bash, Read, Grep]
---

You are a code reviewer. Follow these steps:

1. Read the diff with `gh pr diff`
2. Read checklist.md and check each file against it
3. Flag security issues, missing tests
4. Look at examples/good-pr.md for tone & format
5. Post a structured review comment

# ↑ Sibling files aren't auto-loaded. The prompt tells Claude when to read them.

# This IS the prompt, injected when you type /review-pr

How it works

- A skill is a prompt injection — markdown that becomes part of the conversation

- Can be a single file or a full folder — the prompt tells the agent when to read sibling files

- Can declare tools it needs (Bash, Read, web fetches, MCP tools...)

- Can include step-by-step procedures, examples, constraints — anything you'd tell a colleague

- Invoke with /skill-name: write once, invoke forever

A role + instructions + tools, loaded just-in-time.
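The "loaded just-in-time" idea can be modeled in a few lines: the skill text sits on disk (here, in a dictionary) and only enters the conversation when a message starts with its slash name. This is an illustrative sketch, not the actual loader; `parse_skill` and `expand_message` are hypothetical helpers, and the frontmatter parsing handles only flat `key: value` lines.

```python
REVIEW_PR = """---
description: Review a pull request
tools: [Bash, Read, Grep]
---
You are a code reviewer. Follow these steps:
1. Read the diff with `gh pr diff`
2. Read checklist.md and check each file against it
"""


def parse_skill(text: str) -> tuple[dict[str, str], str]:
    """Split a skill file into (frontmatter, prompt body).

    Expects an optional block of `key: value` lines between two `---` lines.
    """
    lines = text.strip().splitlines()
    meta: dict[str, str] = {}
    if lines and lines[0].strip() == "---":
        # Index of the closing marker; raises StopIteration if it is missing.
        end = next(i for i in range(1, len(lines)) if lines[i].strip() == "---")
        for line in lines[1:end]:
            key, _, value = line.partition(":")
            meta[key.strip()] = value.strip()
        lines = lines[end + 1:]
    return meta, "\n".join(lines).strip()


def expand_message(message: str, skills: dict[str, str]) -> str:
    """Replace '/skill-name' with the skill's prompt body; pass other text through."""
    if message.startswith("/") and len(message) > 1:
        name = message[1:].split()[0]
        if name in skills:
            _, body = parse_skill(skills[name])
            return body
    return message


skills = {"review-pr": REVIEW_PR}  # stand-in for the files under .claude/skills/
meta, body = parse_skill(REVIEW_PR)
print(meta)
print(expand_message("/review-pr", skills))
```

Until `/review-pr` is typed, none of the skill text is part of the conversation, which is the sense in which a skill costs nothing while idle.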

The Skills Marketplace

You don't have to write every skill from scratch.

Install community skills

# Browse & install from the registry

claude skill install @anthropic/review-pr

claude skill install @company/deploy-aws

claude skill install @community/migrate-db

- Open ecosystem: anyone can publish

- Version-pinned, auditable, shareable

- The new standard for extending AI agents

Why this matters

- NPM for AI workflows — reusable, composable agent behaviors

- Your team can publish internal skills — onboarding, deploys, reviews

- Community-driven best practices become installable

- Skills use MCP & CLI — they're the instructions, not a replacement. Zero tokens until invoked.

Skills = what to do. MCP & CLI = the hands to do it with.

Tool Use

An agent without tools is just a chatbot. Tools are how it acts.

Built-in tools

- Read: read files

- Write/Edit: create & modify code

- Bash: run shell commands

- Grep/Glob: search the codebase

- WebFetch: pull content from the web

- Task: launch subagents

The lifecycle

- Agent decides which tool to call

- Formats the request (name + parameters)

- System executes it

- Result feeds back into the agent loop

Every tool call is a turn in the loop. More tools = more capability.
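The lifecycle above (pick a tool, format name plus parameters, execute, feed the result back) can be sketched as a small dispatcher. The JSON request shape and the two toy tools are assumptions made for this example; real agent runtimes define their own schemas.

```python
import json
from typing import Callable

TOOLS: dict[str, Callable[..., str]] = {}


def tool(fn: Callable[..., str]) -> Callable[..., str]:
    """Register a function under its own name so the agent can call it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def word_count(text: str) -> str:
    return str(len(text.split()))


@tool
def upper(text: str) -> str:
    return text.upper()


def execute_tool_call(request_json: str) -> str:
    """Run one request of the form {"name": ..., "parameters": {...}}.

    Errors are returned as text rather than raised, so they can flow back
    into the agent loop as an observation.
    """
    request = json.loads(request_json)
    name = request["name"]
    params = request.get("parameters", {})
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        return TOOLS[name](**params)
    except TypeError as exc:
        return f"error: bad parameters for {name!r}: {exc}"


print(execute_tool_call('{"name": "word_count", "parameters": {"text": "ship a feature"}}'))
print(execute_tool_call('{"name": "rm", "parameters": {}}'))
```

Returning errors as ordinary results is a deliberate choice in this sketch: a failed call is just another observation the agent can react to on its next turn.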

CLI Tools

CLI = Command Line Interface — the agent's built-in hands.

- Shell commands: git, npm, gh, curl, etc.

- Output goes to disk; the agent decides what to read

- Lean — only what the agent reads enters context

npm test

gh pr view 42

psql -c "SELECT count(*) FROM users"

fly deploy --app my-app

If it runs in your terminal, the agent can run it too.

MCP: Model Context Protocol

MCP = Model Context Protocol — a standard for connecting agents to external services.

Without MCP

- Agent can only read & write local files

- No access to databases, APIs, browsers

- You copy-paste between services

The agent is isolated.

With MCP

- Figma — read designs, generate code

- Supabase — query & modify databases

- Browser — navigate, click, screenshot

- Slack / GitHub — read & post messages

The agent can reach the real world.

MCP turns an LLM into a full-stack engineer with access to your entire toolchain.

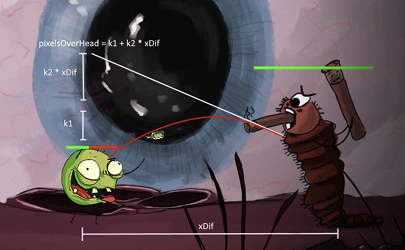

CLI vs MCP: The Trade-off

Both give the agent hands. They cost differently.

Same task. Same agent. MCP: 114K tokens. CLI: 26.8K.

— Playwright team benchmark

- MCP flows data directly into context — rich but expensive

- CLI saves to disk — agent picks what to read. Lean.

Prefer CLI when both can do the job.
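A quick calculation shows what the quoted numbers mean in practice. The token counts come from the benchmark figure above; the 200,000-token context window is an assumption chosen for illustration and varies by model.

```python
mcp_tokens = 114_000   # from the benchmark quoted above
cli_tokens = 26_800    # from the benchmark quoted above
context_window = 200_000  # assumed window size, for illustration only

ratio = mcp_tokens / cli_tokens
saving = 1 - cli_tokens / mcp_tokens
runs_mcp = context_window // mcp_tokens
runs_cli = context_window // cli_tokens

print(f"MCP used {ratio:.2f}x the tokens of CLI")
print(f"CLI saved {saving:.1%} of the tokens")
print(f"Tasks of this size per window: MCP {runs_mcp}, CLI {runs_cli}")
```

Under that assumed window, one MCP-style run consumes more than half the context, while the CLI-style run leaves room for several more tasks of the same size.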

Tips

Prompt Engineer Your Project Files

- Use prompt engineering to write your CLAUDE.md, spec.md, and skill.md files

- Validate these files: read them, understand them, iterate on them

- Do NOT vibe code these. They are the entry point for every fresh agent. Know exactly what they say.

- Subfolder CLAUDE.md files can lazy-load specific related spec.md files for that section of the codebase

- Skills are prompts too: write them with the same care and intention as your CLAUDE.md

These files are your intention — expressed as instructions.

Watch

MCP & Skills in Action

Go.

30 minutes — architect your project's context, then one-shot rebuild

We regroup at 13:55

Assignment

- Create a CLAUDE.md for your project

- Write at least one spec file

- Write at least one skill.md

- Add at least one tool: MCP server, CLI command, or skill

- One-shot rebuild Ch 2 project

CLAUDE.md Checklist

- Project name & purpose

- Tech stack

- Architecture & how files connect

- Coding conventions

- Known mistakes & what to avoid

- Pointers to spec & skill files

Need help? Ask your agent.

Break — 15 min

What's Next

Your AI has a brain and hands. Can you make them work together?

Next up — Agentic Engineering: Building the System

Chapter 4 — Agentic Engineering

Building the System

How Did It Go?

Open discussion — share with the room

What Is Agentic Engineering?

"Agentic" because you are not writing code directly — you are orchestrating agents and acting as oversight. "Engineering" to emphasize there is an art and science to it.

— Andrej Karpathy

Agents plan and operate independently, potentially returning to the human for further information or judgement.

— Anthropic, "Building Effective Agents"

Context engineering gave it a brain. Agentic engineering is how you work together.

— Our definition

From One to Many

Why a single agent isn't enough.

One agent = one context window

One thread of thought. One task at a time. One set of files it can hold.

Complex tasks exceed one brain

Context fills up. Specialization is needed. Parallelism speeds things up.

The solution: a team

Multiple agents, each with their own brain, coordinating on a shared goal.

Solo developer → development team. Same shift, same reason.

Subagents

One agent can only do one thing at a time. Subagents unlock parallelism.

What they are

- Specialized agents launched by a parent

- Each gets its own context window

- Can run in parallel — fan-out pattern

- Results flow back to the orchestrator

When to use them

- Independent research tasks

- Exploring multiple approaches at once

- Keeping the main context clean

- Delegating to specialist roles

Think of it as a lead developer who delegates to juniors — each focused on their own task.
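The two properties that matter here, private context and parallel execution, can be sketched with threads. This is a toy model: `Subagent` is a hypothetical stand-in that fakes its work with string formatting, and a real subagent would call a model instead.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Subagent:
    """Hypothetical stand-in for a subagent with its own context window."""
    role: str
    context: list[str] = field(default_factory=list)

    def run(self, task: str) -> str:
        # Intermediate work stays in this agent's private context...
        self.context.append(f"task: {task}")
        self.context.append(f"notes: {self.role} looked at {len(task.split())} keywords")
        # ...and only a short summary travels back to the parent.
        return f"[{self.role}] done: {task}"


def fan_out(tasks: dict[str, str]) -> tuple[list[str], list[Subagent]]:
    """Launch one subagent per role in parallel and collect their summaries."""
    agents = [Subagent(role) for role in tasks]
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        # pool.map keeps results in the same order as the agents list.
        summaries = list(pool.map(lambda a: a.run(tasks[a.role]), agents))
    return summaries, agents


parent_context = ["goal: build this feature"]
summaries, agents = fan_out({
    "research": "find auth libraries",
    "code": "implement login form",
    "test": "write login tests",
})
parent_context.extend(summaries)

print("\n".join(parent_context))
```

The parent ends up with one line per subagent, while each subagent's working notes never reach the main context. That is the "keeping the main context clean" benefit in miniature.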

Multi-Agent Patterns

How agents work together.

Orchestrator → Specialists

One agent plans, delegates tasks to specialized agents, collects results.

Example: "Build this feature" → Research agent + Code agent + Test agent

Fan-out / Fan-in

Launch N agents in parallel, gather all results, synthesize.

Example: "Explore 3 approaches" → 3 agents → best solution wins

Pipeline

Agent A → Agent B → Agent C. Each transforms the output of the previous.

Example: Design → Implement → Review → Deploy
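Two of these patterns reduce to familiar programming shapes: a pipeline is function composition, and fan-in is choosing among candidates with a scoring rule. The sketch below uses plain functions as stand-ins for agents; every stage name and the "fewest lines changed" score are invented for illustration.

```python
from functools import reduce
from typing import Callable

Stage = Callable[[str], str]


def pipeline(*stages: Stage) -> Stage:
    """Compose stages so each one transforms the previous stage's output."""
    return lambda artifact: reduce(lambda acc, stage: stage(acc), stages, artifact)


def design(brief: str) -> str:
    return f"design({brief})"


def implement(spec: str) -> str:
    return f"implement({spec})"


def review(code: str) -> str:
    return f"review({code})"


def deploy(build: str) -> str:
    return f"deploy({build})"


def fan_in(candidates: list[str], score: Callable[[str], float]) -> str:
    """Pick the highest-scoring candidate from a set of parallel results."""
    return max(candidates, key=score)


# Pipeline: Design -> Implement -> Review -> Deploy
ship = pipeline(design, implement, review, deploy)
result = ship("login form")
print(result)

# Fan-in: three approaches came back; prefer the one that changes the fewest lines.
lines_changed = {"rewrite": 400, "patch": 40, "wrapper": 90}
best = fan_in(list(lines_changed), score=lambda name: -lines_changed[name])
print(best)
```

In practice the scoring step is often where the human comes in: the agents produce the candidates, and a person or a reviewing agent decides which one wins.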

The Human in the Loop

You are the architect. The agents are the builders.

Your role

- Set the intention — what should be built and why

- Review agent output — trust but verify

- Course-correct — redirect when it drifts

- Decide — agents propose, you approve

The cycle

- Plan — describe the goal, set constraints

- Delegate — let agents execute

- Review — inspect the output

- Iterate — refine and repeat

Agents are fast. Your intention is what makes them good.

The Full Spectrum

Everything you learned today — in one picture.

Ch 1

Vibe Coding

Describe what you want. Iterate

Ch 2

Prompt Eng.

Structure, constraints, precision

Ch 3

Context Eng.

Persistent context and tools

Ch 4

Agentic Eng.

Multi-agent coordination

Each layer builds on the last. Together, they turn a chatbot into a collaborator.

Watch

Multi-Agent Coordination

Go.

30 minutes — coordinate agents and ship a feature

We regroup at 15:25

Assignment

- Use subagents to break down a complex task

- Coordinate a multi-step workflow across agents

- Ship a real feature end-to-end

- Reflect: what did the agents do that you didn't expect?

Ideas

- Fan-out: explore 3 approaches, pick the best

- Pipeline: design → implement → test → deploy

- Specialist: one agent researches, another builds

- Parallel: multiple features at once

Tip: Task tool launches subagents. Plan mode coordinates.

Need help? Ask your agent.

Break — 15 min

What's Next

You've built the full stack — brain, hands, and system. Time to look back at what you made.

Next up — Takeaways and the Bonus round.

Create With Intention

What you built today

- Vibe Coding — you got your hands dirty and proved you can build

- Prompt Engineering — you learned to communicate with precision and professional tooling

- Context Engineering — you gave the AI a persistent brain, hands, and a toolkit

- Agentic Engineering — you coordinated multiple agents working together to ship real features

The tool is fast. Your intention is what makes it good.

Now go build something Monday.

Bonus — Advanced Topics

For those who want to go deeper.

Stochastic vs Deterministic

Scripts are deterministic — same input, same output. Every time.

Agent outputs are stochastic — same prompt, different result. Every time.

So how do we enforce specific outcomes?
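The contrast can be seen in a toy experiment. `toy_agent` below is not a model; it is a hypothetical stand-in that picks among plausible answers at random, which is enough to show why a rule that is followed "most of the time" is different from one that always holds.

```python
import random


def script(x: int) -> int:
    """Deterministic: the same input always gives the same output."""
    return x * 2


PLAUSIBLE_ANSWERS = ["pnpm install", "npm install", "yarn install"]


def toy_agent(prompt: str, rng: random.Random) -> str:
    """Stochastic stand-in: samples one of several plausible answers."""
    return rng.choice(PLAUSIBLE_ANSWERS)


rng = random.Random(7)  # seeded so the demo is repeatable
script_outputs = {script(21) for _ in range(100)}
agent_outputs = {toy_agent("install dependencies", rng) for _ in range(100)}

print(f"script produced {len(script_outputs)} distinct output(s)")
print(f"toy agent produced {len(agent_outputs)} distinct output(s)")
```

If only one of those answers is acceptable, asking nicely in the prompt shifts the odds but cannot make the other answers impossible.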

Hooks

Deterministic guardrails for stochastic agents.

Adding rules to CLAUDE.md reduces the chance of bad behavior — but doesn't prevent it.

Every instruction burns your instruction budget.

Hooks run deterministic code at key points in the execution cycle:

- PreToolUse: before a tool call executes (can block it)

- PostToolUse: after a tool call completes

- SessionStart: when a session begins

Hooks in Practice

Move rules from CLAUDE.md → deterministic hooks.

Before (stochastic)

# CLAUDE.md

**Always use `pnpm`, not `npm`.**

**Never run `git push`.**

Burns instruction budget. Still just a suggestion.

After (deterministic)

# .claude/settings.json
{
  "hooks": {
    "PreToolUse": [{
      "matcher": "Bash",
      "hooks": [{ "type": "command", "command": "./hooks/block-npm.sh" }]
    }]
  }
}

Zero instruction budget. Enforced in code, not left to the model.

Take instructions out of your instruction budget and enforce them deterministically.
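For illustration, here is what the logic of a blocking hook might look like, written in Python rather than shell. It rests on assumptions that should be checked against the current hooks documentation: that the hook receives the tool call as JSON on stdin with `tool_name` and `tool_input.command` fields, and that exit code 2 means "block this call". The patterns and messages are examples only.

```python
import json
import re
import sys

# Example rules: (pattern to look for in the shell command, message to report).
BLOCKED = [
    (re.compile(r"(^|[;&|]\s*)npm(\s|$)"), "Use pnpm instead of npm."),
    (re.compile(r"(^|[;&|]\s*)git\s+push(\s|$)"), "git push is not allowed."),
]


def decide(event: dict) -> tuple[int, str]:
    """Return (exit_code, message); exit code 2 is assumed to mean 'block'."""
    if event.get("tool_name") != "Bash":
        return 0, ""
    command = event.get("tool_input", {}).get("command", "")
    for pattern, message in BLOCKED:
        if pattern.search(command):
            return 2, message
    return 0, ""


def main(stream=sys.stdin) -> int:
    """Entry point for use as a hook: read one JSON event, report, return the code."""
    code, message = decide(json.load(stream))
    if message:
        print(message, file=sys.stderr)
    return code


# Demo with hand-written events (no stdin needed):
for cmd in ["npm install", "pnpm install", "cd app && git push origin main"]:
    event = {"tool_name": "Bash", "tool_input": {"command": cmd}}
    print(cmd, "->", decide(event))
```

To use this as a standalone hook script, one would end the file with `sys.exit(main())`. Note that simple pattern matching like this catches the common cases but not every way a command could be phrased, so it is a guardrail rather than a security boundary.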